Looking back on the journey that began in August 2024, I see a deep exploration of system architecture transformation and technical evolution. In an era when AI-generated code is increasingly accessible, I've become more convinced than ever that connecting complex systems, understanding hardware characteristics, and establishing order within data flows remain the irreplaceable core of what engineers do.

I. Building Stability in a Complex Environment

Over this period, I worked at a tech company on Xingshan Road in Neihu, Taipei, contributing to the development of a hardware ecosystem control platform. The experience gave me an in-depth understanding of the complexity behind hardware data acquisition.

My teammates and I drove the adoption of O11y (observability). Under the guidance of a senior QA colleague, we embedded quality assurance thinking into our monitoring metrics, shifting our approach from reactive firefighting to proactive alerting. We adopted strict Log4cxx logging-level conventions, which turned cross-layer debugging between hardware and software from vague guesswork into data-driven analysis.
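Log4cxx itself is a C++ library, so the following is only a rough Python analogue built on the standard-library logging module, not Log4cxx code. It sketches the two ideas in the paragraph above: records are gated by level, and an alert fires on a burst of warnings within a time window instead of waiting for someone to report a failure. The handler name ThresholdAlertHandler, the logger name, and the threshold and window values are all hypothetical.

```python
import logging
from collections import deque


class ThresholdAlertHandler(logging.Handler):
    """Hypothetical handler: calls on_alert when WARNING+ records cluster in time."""

    def __init__(self, threshold, window_s, on_alert):
        # Handler-level gating: DEBUG and INFO records never reach emit().
        super().__init__(level=logging.WARNING)
        self.threshold = threshold
        self.window_s = window_s
        self.on_alert = on_alert
        self._times = deque()

    def emit(self, record):
        now = record.created
        self._times.append(now)
        # Drop timestamps that have fallen out of the sliding window.
        while self._times and now - self._times[0] > self.window_s:
            self._times.popleft()
        if len(self._times) >= self.threshold:
            self.on_alert(record.name, len(self._times))
            self._times.clear()  # reset so one burst raises one alert


alerts = []
logger = logging.getLogger("hw.sensor_bridge")  # hypothetical component name
logger.setLevel(logging.DEBUG)
logger.propagate = False
logger.addHandler(ThresholdAlertHandler(3, 60.0, lambda name, n: alerts.append((name, n))))

logger.info("frame received")  # below WARNING, ignored by the alert handler
for _ in range(3):
    logger.warning("checksum mismatch on frame")

print(alerts)  # [('hw.sensor_bridge', 3)]
```

In a real deployment the on_alert callback would push to a paging or metrics system rather than append to a list.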

Working with multiple PMs across different project phases sharpened my ability to maintain architectural consistency under constantly shifting requirements. The biggest takeaway from this experience was learning how to balance the unpredictability of hardware against the determinism of software.

II. From Frontend to Backend: Building Full-Stack Capabilities

After years of deep specialization in frontend development, I gradually realized that solving complex problems requires going beyond the presentation layer. This drove me to systematically invest in backend development, choosing Django as my primary backend framework.

The choice of Django was pragmatic: its mature ORM efficiently handles complex relational data models, the built-in Admin and Auth systems drastically reduce the cost of building management interfaces, and Django REST Framework makes API design and documentation systematic. In real projects, I dug into core backend challenges: N+1 query optimization (a 93% performance improvement through prefetch_related and select_related), transaction atomicity control, and Decimal precision handling for financial calculations.
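Django's select_related resolves a foreign key with a SQL JOIN, while prefetch_related runs a separate query per relation and stitches the results together in Python. The sketch below is not Django code: it reproduces the three access patterns with the standard-library sqlite3 module and a hypothetical account/entry schema, counting statements so the N+1 shape is visible.

```python
import sqlite3


class CountingDB:
    """Wraps a sqlite3 connection and counts every SQL statement it runs."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def fetch(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def make_db():
    # Hypothetical schema: many ledger entries, each pointing at one account.
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE account (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE entry (
            id INTEGER PRIMARY KEY,
            account_id INTEGER NOT NULL REFERENCES account(id)
        );
        """
    )
    conn.executemany("INSERT INTO account VALUES (?, ?)",
                     [(i, f"acct-{i}") for i in range(1, 6)])
    conn.executemany("INSERT INTO entry (account_id) VALUES (?)",
                     [(i % 5 + 1,) for i in range(20)])
    return CountingDB(conn)


def naive(db):
    # 1 query for the entries, then 1 more per entry: the N+1 pattern.
    rows = db.fetch("SELECT id, account_id FROM entry ORDER BY id")
    out = []
    for entry_id, account_id in rows:
        (name,) = db.fetch("SELECT name FROM account WHERE id = ?", (account_id,))[0]
        out.append((entry_id, name))
    return out


def joined(db):
    # select_related-style: one JOIN brings the related row along.
    return db.fetch(
        "SELECT e.id, a.name FROM entry e "
        "JOIN account a ON a.id = e.account_id ORDER BY e.id"
    )


def prefetched(db):
    # prefetch_related-style: one query per relation, merged in Python.
    rows = db.fetch("SELECT id, account_id FROM entry ORDER BY id")
    ids = sorted({account_id for _, account_id in rows})
    marks = ",".join("?" * len(ids))
    names = dict(db.fetch(f"SELECT id, name FROM account WHERE id IN ({marks})", ids))
    return [(entry_id, names[account_id]) for entry_id, account_id in rows]
```

With 20 entries, the naive loop issues 21 statements against 1 for the JOIN and 2 for the prefetch-style version, and the naive count grows linearly with the number of rows. The JOIN suits single-valued foreign keys; the separate IN query is the better fit for reverse and many-to-many relations, where a JOIN would duplicate parent rows.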

With PostgreSQL as the primary database, I also gained hands-on experience in schema design, indexing strategies, and migration management. This path from frontend to backend gave me a solid understanding of the complete lifecycle, from browser request to database query and back.

III. Deep Architecture Exploration Through Side Projects

Through hands-on work in hardware data integration and backend development, I developed a strong interest in edge computing and the value of data. On my self-built Ubuntu server with dual RTX 3090 GPUs, I independently carried out the following architecture explorations.

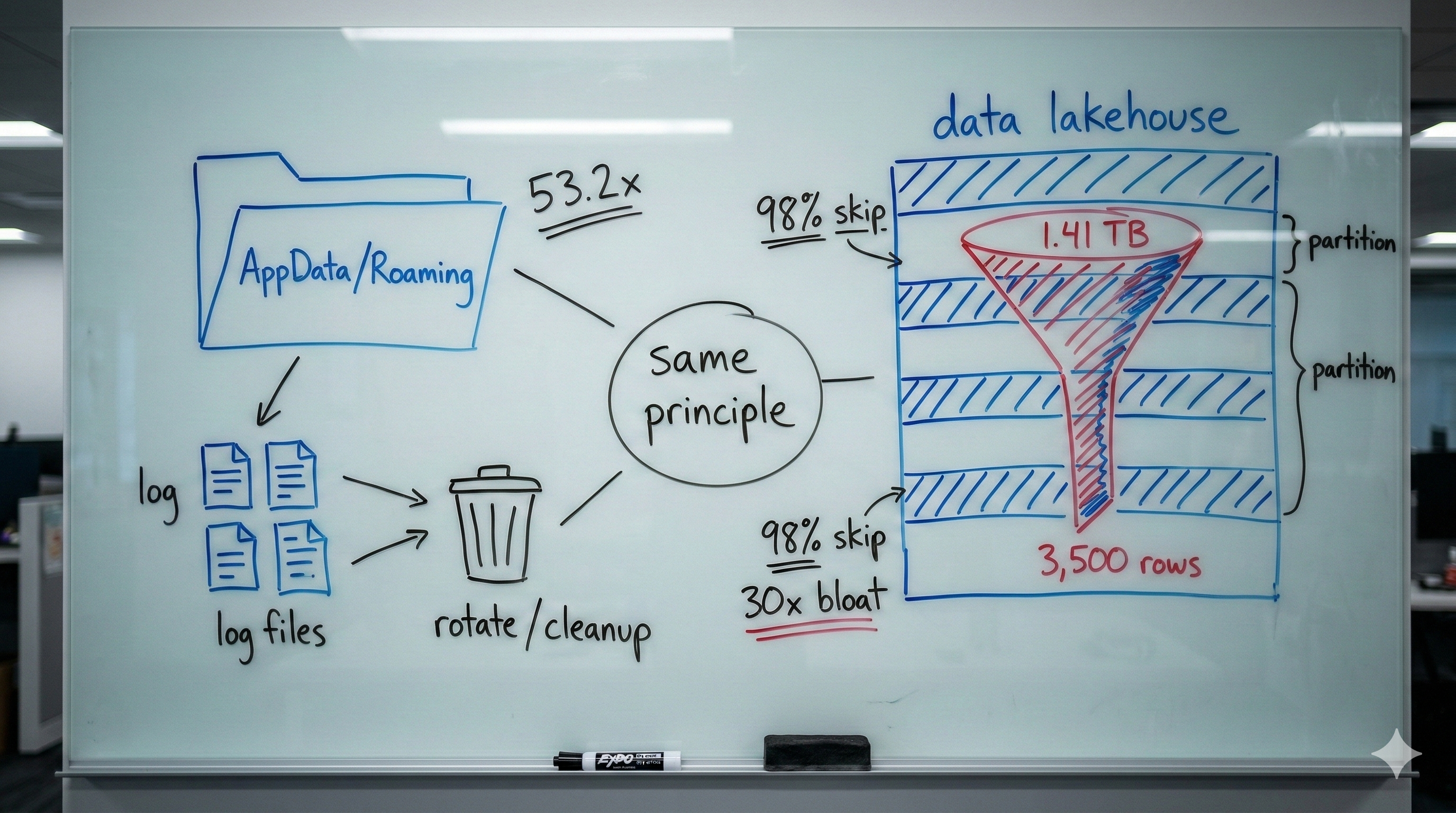

1. Glucose AI Lakehouse: Compliance Meets Agility for Medical Data

This is a data lakehouse proof of concept designed to resolve the tension between audit requirements and analytics speed in digital healthcare.

The storage layer uses Apache Iceberg for immutable table snapshots and time-travel queries, while the compute layer uses Trino for high-performance federated queries. Together they target the strict traceability requirements of IEC 62304. For the data pipeline, I used Polars to build an ETL process for high-frequency glucose data, simulating sensor input and performing trend analysis.
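The project used Polars for this step; to stay library-neutral, the sketch below expresses the same kind of trend analysis in plain Python rather than Polars' API. It assumes readings every 5 minutes (a common CGM interval, assumed here), computes the rate of change in mg/dL per minute between consecutive readings plus a trailing rolling mean, and flags swings above an illustrative 2 mg/dL/min threshold. The Reading type, function names, threshold, and sample values are hypothetical, and the threshold is not a clinical recommendation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Illustrative threshold only (assumption), not clinical guidance.
RAPID_CHANGE_MG_DL_PER_MIN = 2.0


@dataclass(frozen=True)
class Reading:
    ts: datetime
    mg_dl: float


def simulate(start, values, step_min=5):
    """Fake sensor input: one reading every step_min minutes."""
    return [Reading(start + timedelta(minutes=step_min * i), v) for i, v in enumerate(values)]


def rates_of_change(readings):
    """(timestamp, mg/dL per minute) for each consecutive pair, after sorting by time."""
    ordered = sorted(readings, key=lambda r: r.ts)
    rates = []
    for prev, cur in zip(ordered, ordered[1:]):
        minutes = (cur.ts - prev.ts).total_seconds() / 60
        if minutes <= 0:
            continue  # skip duplicate timestamps
        rates.append((cur.ts, (cur.mg_dl - prev.mg_dl) / minutes))
    return rates


def rolling_mean(readings, window):
    """Trailing mean over the last `window` readings; one value per full window."""
    values = [r.mg_dl for r in sorted(readings, key=lambda r: r.ts)]
    return [sum(values[i - window + 1 : i + 1]) / window
            for i in range(window - 1, len(values))]


def rapid_changes(readings, limit=RAPID_CHANGE_MG_DL_PER_MIN):
    """Timestamps where the glucose level moved faster than `limit` in either direction."""
    return [ts for ts, rate in rates_of_change(readings) if abs(rate) > limit]
```

For example, simulate(datetime(2024, 8, 1, 8, 0), [100, 102, 104, 120, 138, 140, 139]) flags the 08:15 and 08:20 readings (3.2 and 3.6 mg/dL/min). A columnar engine such as Polars performs the same per-row shift-and-divide without the Python loop.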

This project taught me that in healthcare scenarios, data traceability and query performance shouldn't be an either/or proposition.

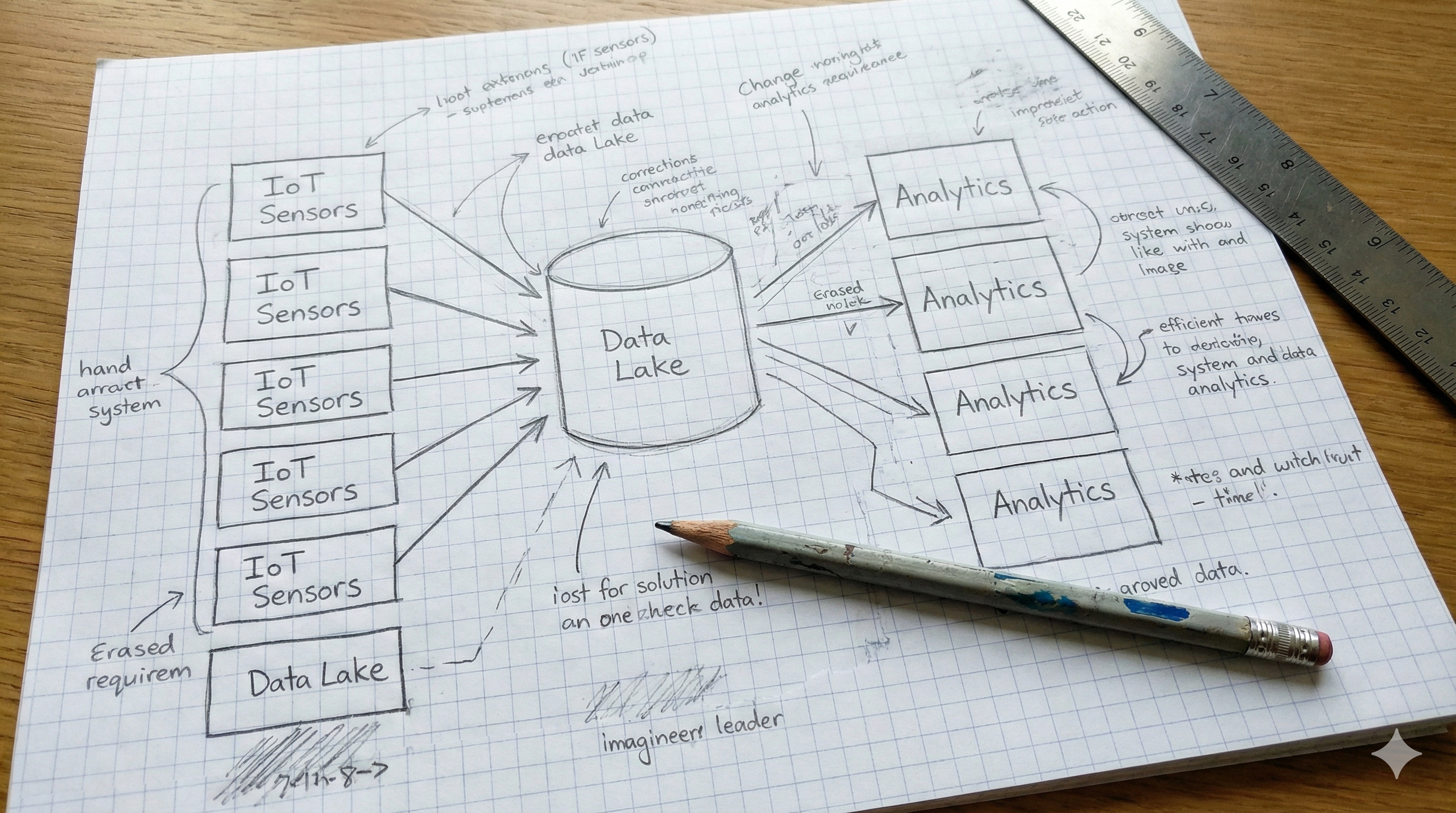

2. High-Performance GPS Analysis Engine: Large-Scale Spatial Computing

To solve the OOM (out-of-memory) issues common in traditional backends when processing large volumes of geospatial data, I shifted the architecture toward a big data processing paradigm. By using distributed computing for large-scale trajectory clustering and analysis, I made my pivotal transition from frontend state-management thinking to backend distributed-systems thinking.
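The engine itself relied on distributed computing; the single-machine sketch below only illustrates the kind of trajectory clustering involved, using DBSCAN with haversine (great-circle) distance. DBSCAN is my choice of example, not necessarily the algorithm the project used, and the eps_m/min_pts values and coordinates are made up. This naive version compares every pair of points, which is O(n^2) in time and is exactly the kind of cost that pushes large datasets toward partitioned, distributed processing.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius
NOISE = -1


def haversine_m(p, q):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(min(1.0, a)))


def dbscan(points, eps_m, min_pts):
    """Label each point with a cluster id (0, 1, ...) or NOISE."""
    labels = [None] * len(points)

    def neighbours(i):
        # Brute-force region query; includes the point itself.
        return [j for j in range(len(points)) if haversine_m(points[i], points[j]) <= eps_m]

    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may later be claimed as a border point
            continue
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: join, but do not expand
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbours = neighbours(j)
            if len(j_neighbours) >= min_pts:  # core point: keep expanding
                queue.extend(j_neighbours)
        cluster += 1
    return labels
```

Two tight groups of GPS fixes about 5 km apart plus one distant outlier come back as clusters 0 and 1 and a NOISE label when eps_m=50 and min_pts=3.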

3. Pangcah-Accounting: Architecture Migration from Next.js to Django

This project went through a complete architecture evolution. Phase 1 used a Next.js full-stack architecture to rapidly validate product logic, but it hit performance bottlenecks in API Routes under complex financial calculations (aggregation queries exceeded 300 ms, breaching the SLA threshold).

After a systematic technical evaluation, Phase 2 executed a complete architecture migration: the frontend moved to React 18, the backend migrated to Django 5.0 + Django REST Framework, and PostgreSQL became the database. Post-migration results showed a 60% performance improvement on critical endpoints, an 80% improvement in complex-query performance, and zero-downtime deployments through a blue-green deployment strategy.
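Blue-green deployment keeps two identical environments, releases to the idle one, and switches traffic only after the new release passes a health check, so the previous version stays available for an instant rollback. The Python sketch below models only that switching logic: the BlueGreenRouter class, its methods, and the health-check callable are hypothetical stand-ins for what would really be a load-balancer or reverse-proxy change, not the project's actual tooling.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Environment:
    name: str
    version: Optional[str] = None


class BlueGreenRouter:
    """Hypothetical model of blue-green switching; real setups flip a load balancer."""

    def __init__(self, initial_version: str, health_check: Callable[[Environment], bool]):
        self.envs = {"blue": Environment("blue", initial_version), "green": Environment("green")}
        self.live = "blue"  # environment currently receiving traffic
        self.health_check = health_check

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    @property
    def live_version(self) -> Optional[str]:
        return self.envs[self.live].version

    def deploy(self, version: str) -> bool:
        """Install `version` on the idle env; switch traffic only if it is healthy."""
        target = self.envs[self.idle]
        previous = target.version
        target.version = version
        if not self.health_check(target):
            target.version = previous  # discard the failed release; live env untouched
            return False
        self.live = target.name  # the single cut-over step
        return True

    def rollback(self) -> None:
        """Send traffic back to the other environment, which still runs the old version."""
        if self.envs[self.idle].version is None:
            raise RuntimeError("no previous version to roll back to")
        self.live = self.idle
```

One caveat the sketch hides: both environments usually share the database, so schema migrations must stay backward compatible (for example, add new columns before removing old ones) for the switch and any rollback to remain zero-downtime.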

I'm currently developing a desktop version using Tauri, designed to provide a more elder-friendly interface for Taiwan's indigenous Pangcah (Amis) community. This project confirmed my belief that the ultimate value of technology lies in serving people.

Closing

What I treasure most from these 550+ days is the trust and professional exchange along the way. Thank you to every teammate I had the privilege of working with.

I move forward with a frontend developer's sensibility, hands-on Django backend experience, a full-stack architectural perspective, and maturing data engineering capabilities, ready for the next challenge at the intersection of data and system integration.