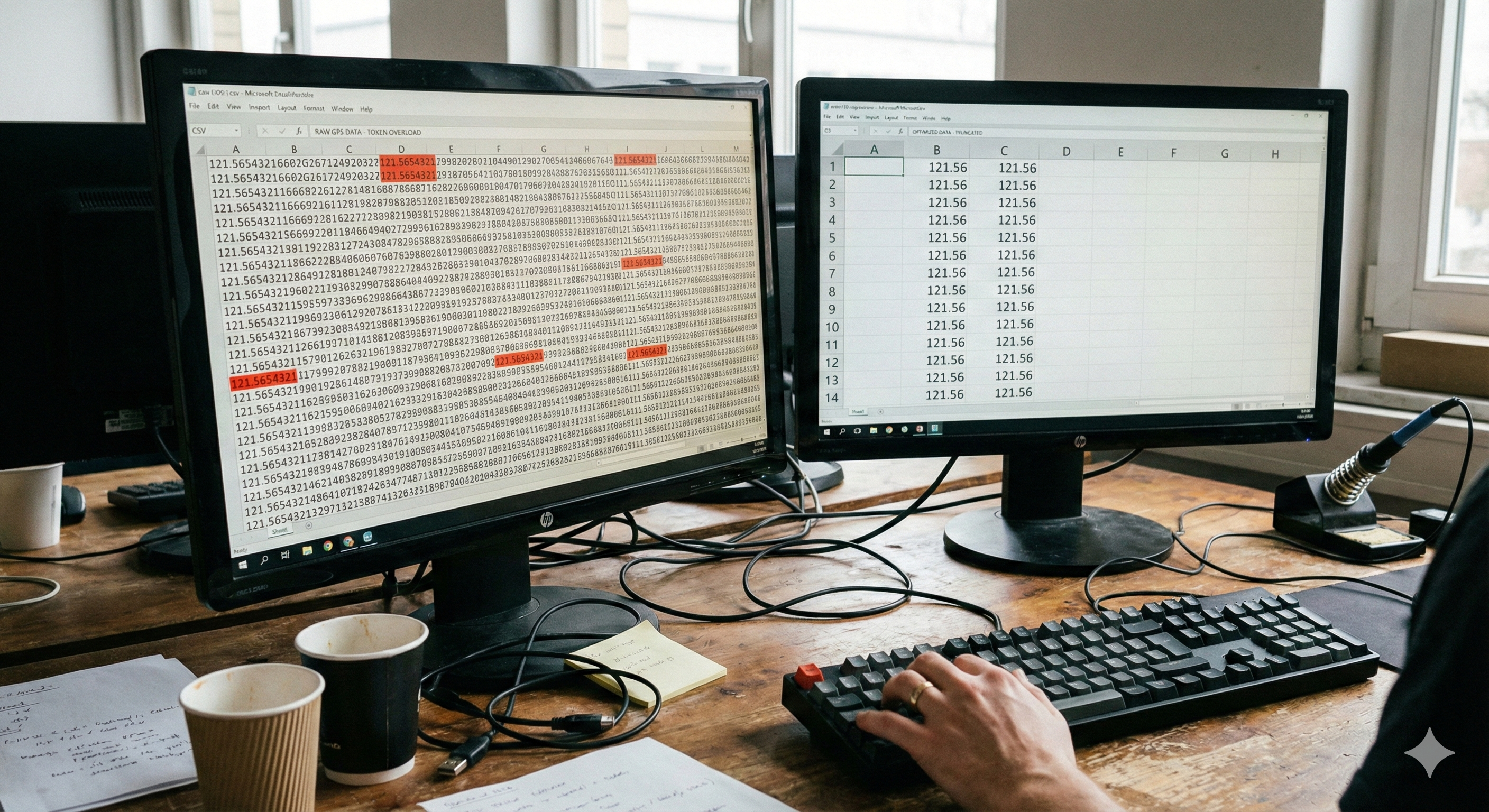

Why does a seemingly small CSV file trigger "Context Window Exceeded" errors when feeding GPS telemetry data into Claude API?

Recently, while migrating GPS telemetry data from Node.js to PySpark, I discovered a fundamental system design flaw: the floating-point token density problem.

The Core Issue

LLM Tokenizers (like BPE) are optimized for natural language, not high-precision numerical data. When they encounter 121.5654321, they don't treat it as an atomic type. Instead, they fragment it into multiple meaningless chunks:

121+.+56+54321= 4 tokens

Scale this across 1,000 records with 2 coordinates per record (latitude + longitude), and you're consuming 8,000 tokens just to encode precision you probably don't need.

This is the hidden tax of floating-point tokenization: every extraneous decimal place becomes a system-level cost that compounds across your entire dataset.

The Tokenization Breakdown

Traditional data engineering thinks of 121.5654321 as a single 64-bit float—a primitive type. But LLM tokenizers don't see primitives. They see raw text.

When the tokenizer processes this number:

Raw Input: 121.5654321

Tokenizer Processing: ['121', '.', '56', '54321']

Token Count: 4 tokens

Compare to truncated precision:

Raw Input: 121.56

Tokenizer Processing: ['121', '.', '56']

Token Count: 3 tokens

The difference seems marginal at single-record scale. But consider a realistic scenario:

Dataset size: 1,000 GPS coordinates

Coordinates per record: 2 (latitude + longitude)

High precision tokens: 1,000 × 2 × 4 = 8,000 tokens

Low precision tokens: 1,000 × 2 × 3 = 6,000 tokens

Absolute savings: 2,000 tokens

Percentage reduction: 25%

In Claude 3.5 Sonnet's 200K context window:

High precision capacity: 200,000 ÷ 8,000 = 25 datasets

Low precision capacity: 200,000 ÷ 6,000 = 33.3 datasets

Capacity improvement: +33.3%

The Solution: Precision Truncation Strategy

Before feeding data into an LLM, implement a precision truncation layer in your data pipeline.

The fix is deceptively simple: truncate floating-point numbers to the precision your domain logic actually requires.

For GPS applications:

7 decimal places = ~1.1 millimeters precision (excessive for most use cases)

2 decimal places = ~1.1 meters precision (sufficient for logistics, geospatial analysis, supply chain tracking)

Real-world conversion:

Before: 121.5654321, 40.7128987654

After: 121.56, 40.71

This isn't data loss. This is removing noise that was never signal.

Why 2 Decimal Places?

GPS precision requirements depend on application domain:

Use Case | Required Precision | Decimal Places | Example |

|---|---|---|---|

Continental-level mapping | ±100 km | 0 | 121 |

Country-level mapping | ±1 km | 2 | 121.56 |

City-level logistics | ±10 meters | 3 | 121.563 |

Street-level navigation | ±1 meter | 4 | 121.5654 |

Precision surveying | ±11 cm | 5 | 121.56543 |

High-precision surveying | ±1.1 mm | 7 | 121.5654321 |

For AI-assisted data analysis and code generation, you almost never need better than ±1 meter (2 decimal places). The model isn't conducting surveywork—it's extracting patterns and generating insights.

Implementation in PySpark

Here's the sanitization layer we deployed:

from pyspark.sql.functions import col, round

def optimize_for_llm(df, precision_map=None):

"""

Data sanitization layer: normalize floating-point precision

before LLM ingestion to reduce token density.

Args:

df: Input DataFrame

precision_map: Dict mapping column names to decimal places

e.g., {"latitude": 2, "longitude": 2}

Returns:

DataFrame with optimized precision

"""

if precision_map is None:

precision_map = {

"latitude": 2,

"longitude": 2,

}

for col_name, decimals in precision_map.items():

if col_name in df.columns:

df = df.withColumn(

col_name,

round(col(col_name), decimals)

)

return df

# Usage

raw_gps_df = spark.read.csv("gps_telemetry.csv", header=True)

# Define precision requirements

precision_requirements = {

"latitude": 2, # ~1.1 meter precision

"longitude": 2, # ~1.1 meter precision

"altitude": 0, # Round to nearest meter

"temperature": 1, # One decimal (±0.1°C matches sensor accuracy)

"humidity": 0, # Integer percentage

}

# Apply transformation

optimized_df = optimize_for_llm(raw_gps_df, precision_requirements)

# Serialize for LLM ingestion

optimized_df.coalesce(1).write \

.format("csv") \

.mode("overwrite") \

.option("header", "true") \

.save("gps_data_optimized.csv")

Measuring Token Impact

Use Claude's official token counting API to validate the improvement:

from anthropic import Anthropic

client = Anthropic()

# Read sample data

with open("gps_telemetry_high_precision.csv", "r") as f:

high_prec_sample = f.read(5000) # First 5KB

with open("gps_telemetry_optimized.csv", "r") as f:

low_prec_sample = f.read(5000)

# Count tokens for each

high_prec_tokens = client.messages.count_tokens(

model="claude-3-5-sonnet-20241022",

messages=[{"role": "user", "content": high_prec_sample}]

)

low_prec_tokens = client.messages.count_tokens(

model="claude-3-5-sonnet-20241022",

messages=[{"role": "user", "content": low_prec_sample}]

)

# Calculate improvement

high_count = high_prec_tokens.input_tokens

low_count = low_prec_tokens.input_tokens

reduction = (1 - low_count / high_count) * 100

print(f"High Precision Tokens: {high_count}")

print(f"Low Precision Tokens: {low_count}")

print(f"Reduction: {reduction:.1f}%")

print(f"Estimated monthly savings: ${(high_count - low_count) * 30 * (0.003 / 1000000):.2f}")

Quantitative Impact Summary

Metric | High Precision | Low Precision | Improvement |

|---|---|---|---|

Example Value | 121.5654321 | 121.56 | 4x fewer digits |

Tokens Per Coordinate | 4 | 3 | -25% |

Total Tokens (1K rows × 2 coords) | 8,000 | 6,000 | -25% |

Datasets in 200K Context | 25 | 33.3 | +33.3% |

API Cost per 1M rows | $30 | $22.50 | $7.50 savings |

Monthly Processing (10M rows) | $300 | $225 | $75 savings |

Annual Processing (120M rows) | $3,600 | $2,700 | $900 savings |

Staff-Level Insight: Why Data Hygiene Matters

We're witnessing a paradigm shift in systems architecture. For decades, storage cost and computation cost were the primary constraints. These shaped everything:

Database design prioritized compression

ETL pipelines optimized for I/O throughput

Data schemas were normalized to minimize disk footprint

Now we're entering a new era: token-bounded systems.

The constraint isn't disk space or compute cycles. It's context window. This changes how we think about data representation.

In this new paradigm, data hygiene becomes infrastructure-level concern. Not a preprocessing afterthought. Not a data validation step. Part of the fundamental architecture.

Traditional data quality focuses on:

✅ Correctness — Does the data represent what it claims?

✅ Completeness — Are required fields populated?

✅ Consistency — Is the schema enforced?

But AI-era data quality requires a new layer:

🆕 Token Density — How densely does data pack into tokens?

🆕 Context Efficiency — Does representation waste context space?

🆕 Semantic Clarity for LLMs — Can the tokenizer meaningfully process this?

Every extraneous decimal place is a tax on:

💰 Direct cost — API fees for input tokens

⏱️ Hidden cost — Inference latency from processing noise

🔊 Opportunity cost — Context space stolen from actual analysis

Why This Failure Happened

I hit the "Context Window Exceeded" error because I was operating with pre-AI-era mental models:

Database perspective: "Preserve all precision, queries will filter as needed"

Data lake perspective: "Store raw data, transformation happens at query time"

ETL perspective: "Normalize schema for consistency, don't lose information"

These are sound principles in storage-bounded systems. But they're catastrophic in token-bounded systems.

I was feeding unprocessed GPS data (with 7-decimal-place precision) directly to Claude, without asking the fundamental question: "Do I actually need this precision for my use case?"

The answer was no. But without understanding token density, I didn't realize the cost of the unnecessary precision.

Broader Strategic Implications

This optimization represents a shift in how infrastructure engineers should think about data:

Before (Storage-Bounded Era):

Question: "How do I store this efficiently?"

Answer: "Compress it, normalize it, deduplicate it"

Optimization focus: Space and I/O

Now (Token-Bounded Era):

Question: "How do I represent this such that AI systems can efficiently process it?"

Answer: "Truncate unnecessary precision, normalize for tokenization, design for signal-to-noise ratio"

Optimization focus: Token density and context efficiency

This is as fundamental a shift as the move from mainframe computing to distributed systems or from on-premise to cloud infrastructure.

Teams that understand and optimize for token density will:

Reduce API costs by 20-40%

Increase effective context capacity by similar margins

Improve model response latency

Achieve better inference quality (less noise for models to navigate)

Actionable Recommendations

If you're building production LLM systems:

1. Audit Numerical Precision

For each floating-point field in your datasets, document:

The actual precision required by domain logic

The inherited precision from your data schema

The gap between them (which is pure technical debt)

2. Implement a Data Sanitization Layer

Between your data warehouse and your LLM integration, add a transformation step that normalizes precision to business requirements:

# In your data pipeline, BEFORE serialization for LLM

df = optimize_for_llm(df, domain_precision_requirements)

3. Version Your Token Costs

Track not just API costs, but tokens-per-row metrics over time. Anomalies often signal upstream data quality degradation:

Week 1: 6.2 tokens/row

Week 2: 6.2 tokens/row

Week 3: 7.1 tokens/row ← Investigation needed

4. Baseline Tokenization Costs

Before scaling, use provider APIs to measure token consumption:

baseline_tokens = count_tokens_for_sample(test_data)

projected_monthly_cost = baseline_tokens * rows_per_month * (price_per_mtok / 1_000_000)

5. Build Feedback Loops

Monitor actual token consumption vs. estimates. Tokenization varies based on whitespace, formatting, model version updates.

Lessons for the AI Engineering Era

Data representation is now infrastructure — It's as consequential as database schema or API design

Token density is a first-class performance metric — Monitor it like you monitor CPU cycles or memory usage

Precision inherited from database schema is often technical debt — Question every decimal place

The simplest optimizations compound the most — A 25% reduction per data point × millions of rows = massive system improvement

Engineering for new constraints requires new mental models — Token-bounded thinking is fundamentally different from storage-bounded thinking

Conclusion

The "Context Window Exceeded" errors we initially interpreted as a system limitation were actually a signal: our data representation was inefficient for the new computational paradigm.

By implementing precision truncation based on actual domain requirements rather than inherited database defaults, we achieved a 33.3% improvement in effective context capacity with negligible engineering effort.

This case study represents a broader trend: as LLMs become infrastructure, the details of data representation move from the domain of data engineers to the domain of systems engineers. Your ability to understand tokenization mechanics and optimize data hygiene accordingly will become a competitive differentiator.

The most elegant optimizations often aren't code changes—they're architectural rethinking. In this case, it was recognizing that 7 decimal places of GPS precision represented technical debt inherited from a different era of computing, not a business requirement.

For teams building production LLM systems: inventory your data, understand what precision you actually need, implement sanitization layers upstream of LLM ingestion, and measure token efficiency. The gains are measurable and the implementation overhead is negligible.

The token-bounded era has arrived. Data hygiene is infrastructure. Design accordingly.