My colleagues often say I'm a bit paranoid. Not the "my solution is the best" kind of paranoia, but the "I always assume something will break" kind.

In 10 years of engineering, this habit has saved me multiple times. But this time, it almost killed us anyway.

The Cost-Cutting Initiative

A few years ago, I worked at a B2B2C food delivery platform. We were using Google Maps API to determine delivery eligibility, and it was eating up our monthly budget.

Management looked at the numbers and decided to switch to a third-party mapping service that cost one-tenth as much (I'll call it Map B).

I was assigned to validate whether this switch was feasible.

I wrote a Node.js script and designed what I called a "rolling validation plan":

Every week, the backend team would export the previous week's actual order data

The test ran for an entire month, accumulating 2,000+ real orders

Using the Haversine Formula, I calculated distance errors between Google Maps and Map B

Results showed: in metropolitan areas, 95% of orders had acceptable error margins

It looked perfect. The report went to the business team, who showed it to management. The executive director slapped the table: "Good. Let's switch."

We were all confident.

The Business Logic I Didn't Fully Grasp

Let me pause here to explain something crucial, because this detail determined how catastrophic the disaster would be.

Our system operated on a monthly SaaS rental model. Merchants paid monthly fees to rent our ordering system.

The mapping API's job was simple but critical: determine "are you within my delivery range?" Every location had strict settings, like "delivery available within 3 km" or "additional fee for 5+ km."

A wrong distance calculation wasn't just a regular app bug. It was a blocker on merchants' revenue.

Customers clearly within range couldn't place orders. The system blocked legitimate transactions. Merchants watched their phones and watched money disappear.

Then merchants would call, and they'd be loud: "I pay you thousands of dollars every month to rent your system. Your system just blocked me from taking orders. How am I compensated? Will you refund my monthly fee? Will you cover my lost revenue?"

This wasn't a customer service issue. This was financial liability.

At the time, I didn't fully understand this. Or maybe I understood it intellectually but massively underestimated its importance.

English

Fifteen Minutes After Going Live

It was 2 PM, peak traffic hours, the day of the switch.

Fifteen minutes later, I started seeing Slack messages exploding.

Not "we've saved costs" kind of news.

"Customer says address detection is wrong," "Why can't I order when I'm clearly in range," "What's happening?"

The operations manager posted customer screenshots directly. His messages had no periods—just words, raw frustration.

The Disaster Had Two Shapes

Shape One: Regular Customers Locked Out

"I see on Google Maps I'm only 2.8 km away—why is your app saying I'm over 3 km and can't order?"

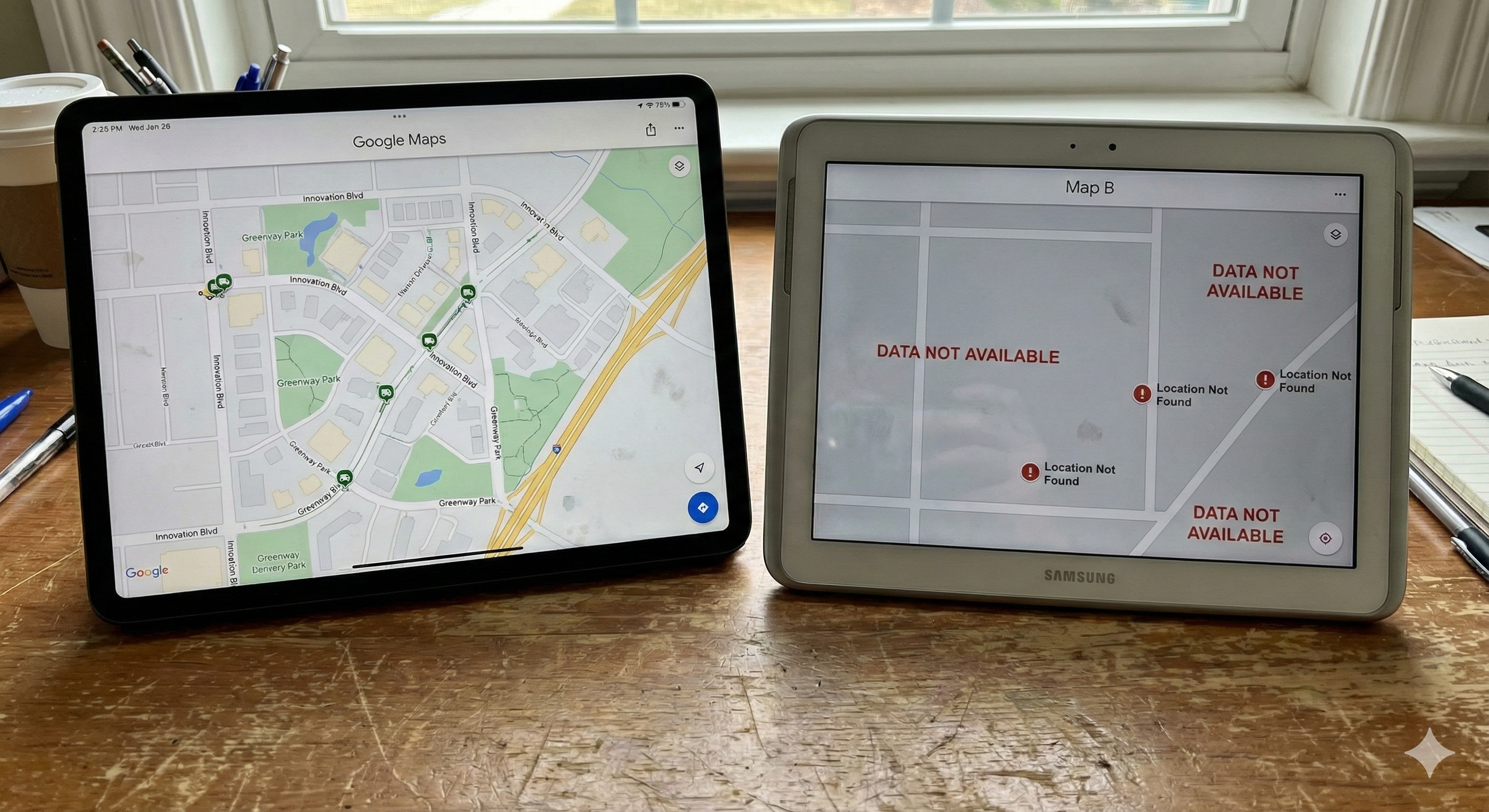

Map B had incomplete data for certain new roads. It calculated the distance as 3.2 km. Over the limit. Order blocked.

For merchants, this was a cooked duck flying away.

That afternoon, several chain restaurants called our business team directly: "If your system keeps blocking orders, we're canceling next month."

Shape Two: Systemic Shutdown

"All orders from a new development zone in Hsinchu are failing to locate—the system says we can't deliver there!"

A newly developed area. Google already had complete road networks. Map B still had nothing.

The system logic was simple: unable to calculate distance → mark as undeliverable area.

So an entire district's orders were systematically blocked by us.

Compensation Requests Started Pouring In

Customer complaints increased 400% within 30 minutes.

This wasn't just a 5% technical error. This was real money lost.

The operations director sat in the war room, looking at the compensation estimate sheet. Every line was a merchant's monthly fee reduction request.

I remember him looking up and asking, "How much do we owe?"

Nobody wanted to say that number.

That Escape Hatch

I sat at my desk, fingers on the keyboard.

My instinct wasn't "fix it fast," but "wait—do I have an exit plan?"

Thankfully, I'm the paranoid type.

When I originally implemented Map B, I'd embedded a Feature Flag in the code. Not something I thought of afterwards—I'd designed an escape route from the beginning.

// The toggle that saved us

const distanceCalculator = config.useMapB

? new MapBService()

: new GoogleMapsService();

const deliveryFee = distanceCalculator.calculate(store, customer);

One boolean value. Flipping it would instantly switch the entire distance calculation engine.

No redeployment needed. No downtime. Millisecond-level switching.

I opened the config and flipped that switch.

The system's distance calculation engine instantly switched from Map B back to Google Maps.

Customers could place orders again. Merchants' anger gradually subsided. The operations team stopped calculating compensation figures.

From total meltdown to stable: less than 45 minutes.

Why My Test Never Caught This

Later, sitting at my desk with that "95% Accurate" report in hand, I realized something stupid.

Every single order in my test had completed successfully.

But what about the orders that failed?

The ones in Hsinchu's new development zone that failed to locate. The winding alleys with weird address formats. Locations under overpasses.

These orders never made it into my test dataset. They were filtered out early in the pipeline.

I used "top-10 student grades" to predict "remedial class performance."

And I hadn't realized I was doing this.

That's survivorship bias.

During WWII, statisticians examined returning bombers to understand which areas took the most damage. They found that wings took the most hits. So they recommended reinforcing the wings.

But someone raised an objection: "We're looking at planes that came back. What about planes shot down—where were they hit?"

The part that needed reinforcement wasn't the wings. It was the fuselage.

My test dataset was like those returning bombers. I only saw "successfully completed" orders. I didn't see orders that failed at the location stage.

My Node.js validation script ran for a month with 2,000+ samples and looked very professional.

But it fundamentally couldn't see the whole picture.

The Ending Back Then: We Retreated to Our Comfort Zone

After the disaster, to prevent compensation disputes from escalating, we completely rolled back to Google Maps.

The project was shelved indefinitely. That "95% Accurate" report got buried deep on a hard drive.

We stopped the bleeding and preserved merchant relationships, but I couldn't shake a nagging feeling.

Back then, limited by my technical vision, I could only choose between "expensive precision (Google)" and "dangerous sampling (Node.js)." I lacked the capability to process a million historical orders, and I had no mechanism to instantly catch disasters like Hsinchu's new zone.

That was the limit of a Senior Engineer back then.

Three Years Later: What Would I Do Now?

Today, AI and big data technologies are unrecognizable from then.

Recently, staring at this archived project, I thought: "With today's tech stack, could I actually solve what felt unsolvable back then?"

It wasn't just about writing code better. I wanted to fix that regret.

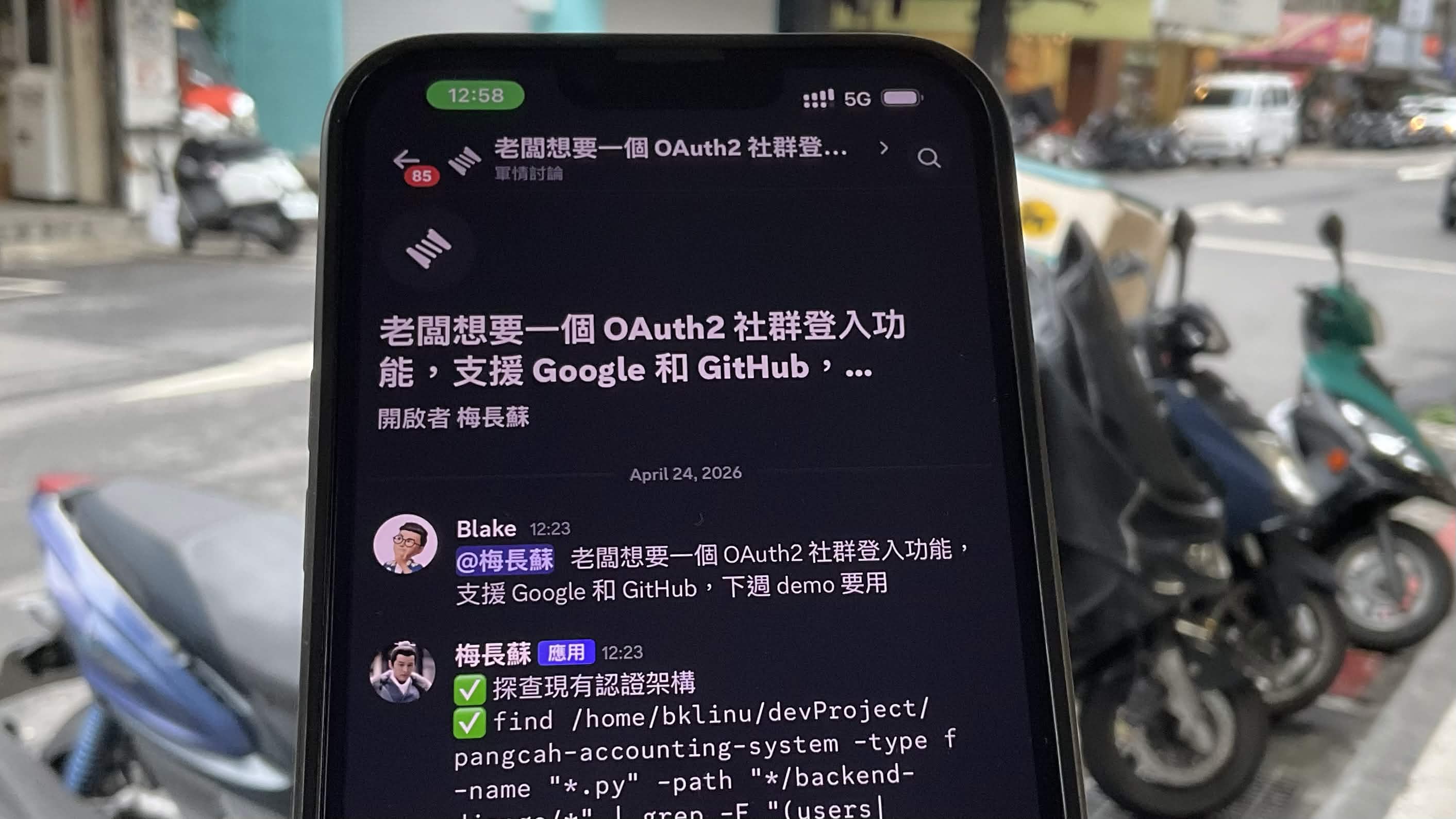

So I started a new side project to rebuild that decision system. But this time, instead of Node.js scripts, I built a complete "Intelligent Decision Matrix":

Full-Scale Data Cleansing (Apache Spark)

No more sampling 2,000 records. Using Spark, I ingest millions of historical orders and directly excavate those hidden 5% failures.

Real-Time Incident Interception (Real-time Ingestion)

Building real-time alert mechanisms. When the first location failure occurs in Hsinchu, the system doesn't wait for someone to check logs—it reacts at millisecond speed.

AI Decision Engine (LLM Analysis)

I've brought in large language models that act like a virtual operations director. Instead of looking at cold error metrics, they calculate directly: "Switching this region saves $500 in API costs, but carries $5,000 in potential compensation risk. Recommendation: don't switch."

From "Writing Code" to "Designing Systems"

For me, this side project means more than just practicing new tech.

It's a conversation across time.

Back then, I saw a performance problem ("Node.js can't handle it").

Now, I see how to use Spark + AI to solve architectural problems around "managing business liability risk."

We often ask ourselves: where's an engineer's real value?

I think it's not in whether you wrote perfect code years ago. It's in whether you later have the capacity to solve—with new perspectives—the problems that once left you helpless.

(To be continued in Part II: Rejecting Blind Testing! How I Used PySpark and LLMs to Rebuild That Mapping Decision System.)